UCU is concerned about the use and possible abuse of Module Evaluation survey data during Appraisal meetings. We have received reports that some tutors have been challenged over low overall ‘quality’ ratings, and think this is inappropriate given our concerns over the quality and validity of that data.

Southampton UCU’s win-win solution

Further down this page we set out the reasons why UCU thinks the current end-of-module evaluations are of limited value and validity. In line with the University’s strategy of ‘Simply Better’, we propose that all tutors carry out their own mid-module evaluations, which:

- are carried out in class to get a good response rate;

- do not take significant time or effort to carry out;

- provide valuable feedback that can improve the module;

- can directly influence the current cohort’s experience;

- can be used to challenge the validity of poor end-of-module survey data.

Some faculties already mandate mid-module evaluations, so this is aligned with action that the University needs to take to improve our students’ learning experience and the feedback they give via the NSS. The Students’ Union is also fully behind this proposal.

Details of how you can run these mid-module evaluations.

The benefits of Mid-Module Evaluation

We all want feedback from our students that will help us develop our teaching and give them the best possible learning experience within the time and resources available. The questions asked in the end-of-module survey usually fail to provide that, with their reliance on Likert scales.

Our proposal is to start by asking students to identify the best features of the module; what did they like, and what worked well? It is good to receive recognition for the hard work put into designing and running modules, especially any recent improvements made. Data of this sort can be used to support the sharing of good practice and educational innovation with colleagues.

The second question asks how the module could be improved, which demands more engagement from students than simply asking what they didn’t like. It also taps into their creativity, and they may suggest ideas that could significantly improve the module. It is essential that the tutor responds to this feedback quickly, perhaps by email, a Blackboard announcement or a Panopto video. In general you should respond to common themes rather than individual comments, either explaining what action will be taken (immediately or next year?), acknowledging good suggestions, or giving reasons why changes asked for cannot be made.

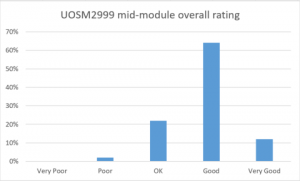

The third question does ask students to rate the module on a five-point scale, but each point has a description: Very Good, Good, OK, Poor, Very Poor. Does this match the end-of-module quality question? Probably not, because that just uses numbers, and who knows what individual students actually mean when they rate a course as 3?

Note: I originally proposed that the scale went Outstanding, Very Good, Good, Poor, Very Poor – but it was pointed out to me that this might be ‘training’ our students to rate Good courses as a 3… which would be very bad for us when they come to fill in their NSS scores and anything that counts for subject-level TEF. But we still don’t want to have modules which the majority of students think are merely ‘OK’.

We would expect this data to be interpreted using a graph rather than a meaningless average – after all, you can’t average descriptions! The key point here is that by conducting this survey in class you should get a high response rate and a realistic view of student satisfaction.
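As a rough illustration of what "a graph rather than an average" could look like, here is a minimal Python sketch that counts how many students chose each description and prints a simple text bar chart. The function names and the sample responses are invented for illustration; they are not part of any University system or real survey data.

```python
from collections import Counter

# The five descriptions from the proposed third question, best to worst.
SCALE = ["Very Good", "Good", "OK", "Poor", "Very Poor"]


def tally(responses):
    """Count how many students chose each description, in scale order."""
    counts = Counter(responses)
    return [(label, counts.get(label, 0)) for label in SCALE]


def text_chart(responses):
    """Return a simple text bar chart, one row per description."""
    rows = []
    for label, count in tally(responses):
        rows.append(f"{label:<10}{'#' * count} {count}")
    return "\n".join(rows)


# Hypothetical in-class responses, invented for illustration only.
sample = ["Very Good"] * 6 + ["Good"] * 11 + ["OK"] * 5 + ["Poor"] * 2

print(text_chart(sample))
```

A chart like this keeps the descriptions visible, so a tutor can see at a glance whether most of the class sits at ‘Good’ or at ‘OK’ without converting anything to a number.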

Finally, there is an opportunity for self-evaluation, with students reflecting on their engagement with the module. We are suggesting a question style that looks like one question but actually gathers a wide range of data:

Which of these statements about this module do you agree with? (choose as many as apply)

O I am working hard to get a good grade

O I am interested in the topics covered so far

O I have developed my study skills and/or subject skills

O I am confident that I will achieve the learning outcomes

O I am keeping up with my independent study

O I know I should be working harder

These are only suggestions, and we hope that a standard set can be agreed.

Serious problems with Module Evaluation data

Perhaps the key problem is the poor response rate by students to end-of-module online surveys; typically no more than 25%, which makes the results for smaller cohorts statistically unreliable. The surveys ask for student input at a time when they are preparing for their exams, and they know that their responses will have no impact on their own learning experience. Each module survey has lots of questions and responding thoughtfully to the surveys for every module taken that semester could easily take an hour.

Low response rates also raise the impact of bias; individual student scores can have a significant impact on average ratings. For example, if 10 students respond from a cohort of 40, and three of those are unhappy about their marks, then those three make up 30% of the responses but only 7.5% of the cohort, and their low scores can pull the average down sharply. It is quite possible that most of the 30 students who did not respond were very happy with the course, but their views do not count in the survey. And of course unhappy students are more motivated to respond since they have something to complain about.
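To put numbers on the 10-out-of-40 example, here is a small Python sketch. The individual scores are assumptions chosen purely for illustration: we suppose the three unhappy students rate the module 1, and everyone else (including the 30 who stayed silent) would have rated it 4. Real scores would of course vary.

```python
def mean(scores):
    """Arithmetic mean of a list of numeric scores."""
    return sum(scores) / len(scores)


# ASSUMPTION: invented scores, not real survey data.
# 10 respondents from a cohort of 40; three unhappy students give a 1.
respondents = [4] * 7 + [1] * 3
# ASSUMPTION: the 30 non-respondents would all have given a 4.
silent = [4] * 30

survey_mean = mean(respondents)           # what the survey reports
cohort_mean = mean(respondents + silent)  # what the whole cohort would give

unhappy_share_of_responses = 3 / len(respondents)
unhappy_share_of_cohort = 3 / (len(respondents) + len(silent))

print(f"Survey average (10 responses): {survey_mean:.2f}")
print(f"Whole-cohort average (40):     {cohort_mean:.2f}")
print(f"Unhappy share of responses:    {unhappy_share_of_responses:.1%}")
print(f"Unhappy share of cohort:       {unhappy_share_of_cohort:.1%}")
```

Under these assumptions the reported average is 3.1, while the whole cohort would have averaged about 3.8. The same three students account for 30% of the responses but only 7.5% of the cohort.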

Research has also found significant gender and racial bias in module survey results – and a 2014 meta-analysis of 20,000 evaluations found that “in particular male students evaluate female teachers worse than male teachers. The bias is largest for junior women…”

Finally, there are unpopular modules (stats, anyone?) or conceptually difficult modules that always seem to get low overall satisfaction scores – and someone has to teach them. There are also valid educational points about modules that aim to challenge students, require serious hard work or force them out of their comfort zones. Conversely there are worries about tutors spoon-feeding students in an attempt to get good feedback scores.

One more thing – any statistician will tell you that you shouldn’t average Likert scales, and yet we continue to use ‘average module scores’. The only mandatory question specified in the University’s Module Survey Policy is “Please rate the overall quality of this module” ranging from 5 (highest) to 1 (lowest). There is no indication or guidance on what these numbers represent; for example is 5 ‘exceptional’ or just ‘very good’? Does 3 mean the module is ‘normal, average, acceptable’? And how do students interpret the ‘quality’ of a module in any case?
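A quick sketch of why an ‘average module score’ can mislead: the two hypothetical modules below have identical averages but completely different patterns of response. The module names and scores are invented for illustration only.

```python
from collections import Counter
from statistics import mean

# ASSUMPTION: invented ratings on the 1 (lowest) to 5 (highest) scale.
module_a = [3] * 10            # every student gives a 3
module_b = [1] * 5 + [5] * 5   # the class is split between 1 and 5


def distribution(scores):
    """Return the number of responses at each point from 5 down to 1."""
    counts = Counter(scores)
    return {point: counts.get(point, 0) for point in range(5, 0, -1)}


print("Module A average:", mean(module_a), "distribution:", distribution(module_a))
print("Module B average:", mean(module_b), "distribution:", distribution(module_b))
```

Both modules average 3, yet one has a uniformly lukewarm class and the other has a class divided between the top and bottom ratings. Only the distribution shows the difference.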

Concerns about data protection

Following a failed project in 2015 to introduce EvaSys, a centralised Module Evaluation system, the Module Survey policy allows faculties to use whatever method and technology they choose.

“Faculties should consider, when making the arrangements, the best way to conduct the survey in order to achieve a good response rate and to obtain reliable, high quality data which can be used as the basis for decisions about future module or programme enhancements.”

Many have chosen to use the University’s own iSurvey, while others have opted for externally hosted commercial solutions such as SurveyMonkey. The Module Survey policy states that “Should a Faculty wish to use an externally owned system they should contact QSAT (qsa@soton.ac.uk) for confirmation that the system has been judged DPA compliant by Legal Services”, but QSAT have confirmed that no requests of this type have been received. This is of particular concern given the imminent arrival of the GDPR (General Data Protection Regulation), which can impose crippling fines on organisations and businesses that fail to protect personal data. Some module surveys include data about the performance of individual named lecturers, so they fall under the GDPR’s protection.